Every call hides patterns. Speech analytics [1] turns those patterns into actions that fix problems and grow results.

Speech analytics converts call audio into text and labels. It mines topics, intent, sentiment, silence, and compliance signals so I can coach faster, automate scoring, and improve customer experience.

The value comes from doing the basics well: accurate transcription, clear categories, and careful governance. Below I explain how I mine topics, sentiment, and compliance; which use cases pay back first; when to choose real-time vs post-call; and how I secure transcripts and PII.

How do I mine topics, sentiment, and compliance?

Good models help, but method beats magic. Clear rules and clean labels make insights stable and repeatable.

I tag calls with a simple taxonomy, combine keyword spots with intent models, layer sentiment and silence metrics, and add compliance checks with strict thresholds and audits.

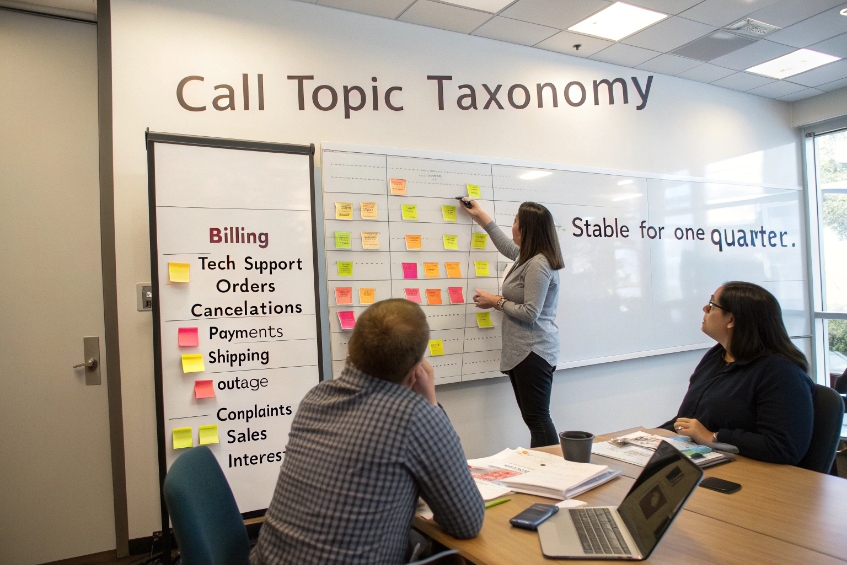

Build a lightweight taxonomy first

I start with 25–50 business topics, not 500. Each topic has a short name, a few sample phrases, and a success definition. Example families: Billing, Tech Support, Orders, Cancellations, Payments, Shipping, Outage, Complaints, Sales Interest. I keep it stable for a quarter so trend lines are real.

Mix pattern types for accuracy

- Keyword/Phrase spotting: Fast and explainable. I include variants and common misspeaks.

- Intent/NLU classification: Catches natural language that does not match exact phrases. I use it where wording varies a lot.

- Acoustic cues: Silence, overlap, escalation words, upset tone markers, and fast/slow speech. These are helpful context, never the only truth.
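As a rough illustration of the keyword/phrase spotting layer, here is a minimal Python sketch. It assumes the transcript arrives as `(start_sec, text)` pairs; the phrase lists and the names `TOPIC_PHRASES`, `Hit`, `compile_patterns`, and `spot` are hypothetical and not taken from any specific product.

```python
import re
from dataclasses import dataclass


@dataclass
class Hit:
    topic: str
    phrase: str
    start_sec: float


# Hypothetical phrase lists: each topic carries variants and common misspeaks.
TOPIC_PHRASES = {
    "Cancellations": ["cancel my account", "cancel my service", "close my account"],
    "Billing": ["wrong bill", "overcharged", "over charged"],
}


def compile_patterns(topic_phrases):
    """Build one case-insensitive, word-bounded pattern per topic."""
    patterns = {}
    for topic, phrases in topic_phrases.items():
        alternation = "|".join(re.escape(p) for p in phrases)
        patterns[topic] = re.compile(rf"\b(?:{alternation})\b", re.IGNORECASE)
    return patterns


def spot(utterances, patterns):
    """utterances: list of (start_sec, text). Returns hits with timecodes."""
    hits = []
    for start_sec, text in utterances:
        for topic, pattern in patterns.items():
            for match in pattern.finditer(text):
                hits.append(Hit(topic, match.group(0).lower(), start_sec))
    return hits
```

Because every hit carries the matched phrase and a timecode, a reviewer can jump to the exact moment and see why the tag fired. An intent model would sit beside this for wording the phrase lists miss.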

Sentiment the simple way

In call center sentiment analysis [2], I track sentiment at the beginning, middle, and end of each call, not just one overall score. A call that moves from negative → neutral or positive is a save. I also capture specific empathy markers (“sorry,” “I can help,” “let me check”) and interruptions. This gives a clear coaching line: fewer interruptions, clearer empathy phrases, better endings.
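The start/middle/end view can be sketched in a few lines of Python. This assumes some upstream model has already scored each utterance between -1 and 1; the `NEGATIVE_BELOW` cut-off and the function names are illustrative assumptions, not a standard.

```python
from statistics import mean

# Assumed cut-off: averages below this count as negative.
NEGATIVE_BELOW = -0.2


def trajectory(scored, call_duration_sec):
    """scored: list of (start_sec, score). Averages scores per third of the call."""
    if call_duration_sec <= 0:
        raise ValueError("call_duration_sec must be positive")
    buckets = {"start": [], "mid": [], "end": []}
    for start_sec, score in scored:
        position = start_sec / call_duration_sec
        if position < 1 / 3:
            key = "start"
        elif position < 2 / 3:
            key = "mid"
        else:
            key = "end"
        buckets[key].append(score)
    # A third with no scored utterances is reported as None, not as neutral.
    return {k: (mean(v) if v else None) for k, v in buckets.items()}


def is_save(traj):
    """True when the call opens negative and ends neutral or positive."""
    start, end = traj["start"], traj["end"]
    if start is None or end is None:
        return False
    return start < NEGATIVE_BELOW and end >= NEGATIVE_BELOW
```

Splitting by time rather than by utterance count keeps a long customer monologue from dominating one bucket; splitting by utterance count would be an equally reasonable choice.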

Compliance as a checklist, not a guess

I set binary rules for disclosures and required phrases. For example:

- Recording notice within the first 15 seconds.

- Identity verification before account actions.

- Payment handling: pause/resume on card digits; no card data in transcript.

- Opt-out handling: “STOP” or “Do not call” captured and synced.

Each rule has a high-confidence phrase list, a time window, and a fallback human check for borderline scores. I never auto-fail an agent on a 60% match.
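A minimal sketch of one such rule in Python follows. It assumes agent utterances arrive as `(start_sec, text, confidence)` tuples and uses the utterance confidence as the match score, which is a simplification. The thresholds (`pass_at`, `fail_below`) and all names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    PASS = "pass"
    FAIL = "fail"
    REVIEW = "human_review"


@dataclass
class Rule:
    name: str
    phrases: tuple
    window_sec: float        # the phrase must start within this many seconds
    pass_at: float = 0.9     # assumed threshold for an automatic pass
    fail_below: float = 0.4  # assumed threshold for an automatic fail


def check(rule, utterances):
    """Return (verdict, evidence) for one rule on one call."""
    best, evidence = 0.0, None
    for start_sec, text, confidence in utterances:
        if start_sec > rule.window_sec:
            continue  # outside the allowed time window
        lowered = text.lower()
        if any(p in lowered for p in rule.phrases) and confidence > best:
            best, evidence = confidence, (start_sec, text)
    if best >= rule.pass_at:
        return Verdict.PASS, evidence
    if best < rule.fail_below:
        return Verdict.FAIL, evidence
    # Borderline scores go to a person instead of auto-failing the agent.
    return Verdict.REVIEW, evidence
```

With these assumed thresholds, a 60% match lands in `REVIEW`, and the evidence tuple gives the reviewer the timecode to check.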

A small structure that works

| Layer | What it does | Output |
|---|---|---|
| ASR | Transcribes speech | Words + timestamps |
| Spotting | Finds phrases | Hits with timecodes |
| NLU | Classifies intent | Topic label + confidence |
| Sentiment | Scores segments | Start/Mid/End scores |
| Metrics | Measures flow | Silence %, overlap, talk ratio |
| Compliance | Checks rules | Pass/Fail + evidence |

With this stack, I can explain every tag and fix errors fast.
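To make the Metrics layer concrete, here is a small Python sketch that derives silence %, overlap, and talk ratio from speaker-labeled segments. The input shape `(speaker, start_sec, end_sec)` and the `"agent"` speaker label are assumptions about what the ASR layer emits.

```python
def flow_metrics(segments, call_duration_sec):
    """segments: list of (speaker, start_sec, end_sec)."""
    # Sweep over segment boundaries, tracking how many speakers are active.
    events = []
    for _, start, end in segments:
        events.append((start, 1))
        events.append((end, -1))
    # At equal times an end (-1) sorts before a start (+1),
    # so segments that merely touch do not count as overlap.
    events.sort()

    active = 0
    prev = 0.0
    speech = 0.0   # time with at least one speaker
    overlap = 0.0  # time with two or more speakers
    for t, delta in events:
        if active >= 1:
            speech += t - prev
        if active >= 2:
            overlap += t - prev
        active += delta
        prev = t

    talk = {}
    for speaker, start, end in segments:
        talk[speaker] = talk.get(speaker, 0.0) + (end - start)
    total_talk = sum(talk.values())

    return {
        "silence_pct": 100.0 * (call_duration_sec - speech) / call_duration_sec,
        "overlap_sec": overlap,
        "agent_talk_ratio": talk.get("agent", 0.0) / total_talk if total_talk else 0.0,
    }
```

These numbers are context for coaching rather than verdicts: a high silence % may mean a slow system, not a slow agent.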

Which use cases deliver ROI first for me?

Chase the problems that waste minutes or create risk. Wins show up in AHT, FCR, CSAT, and rework.

Automated QA, post-call summaries, churn/save detection, and root-cause heatmaps pay back first. They cut handle time, reduce repeats, and catch risk without hiring more QA. Modern AI-powered speech analytics platforms [3] make these use cases easier to deploy.

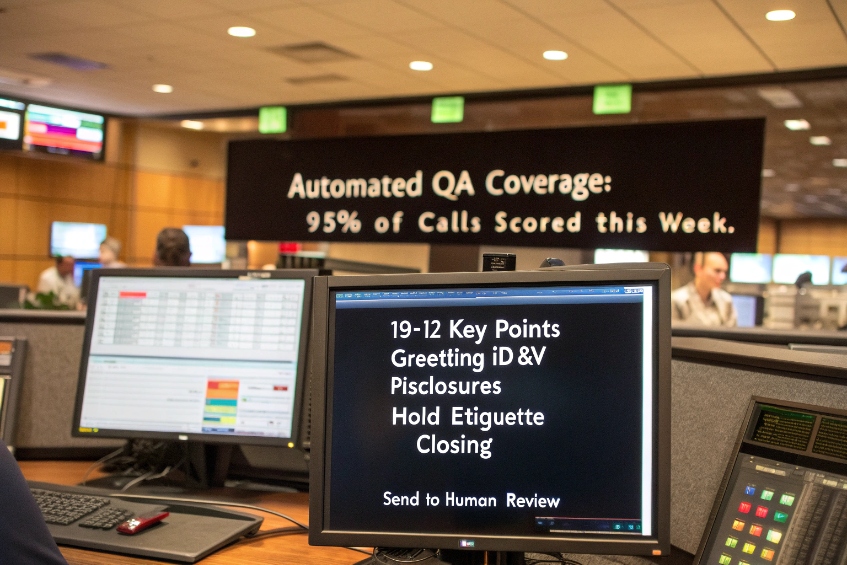

1) Automated QA coverage (80–100% of calls)

Manual QA touches a few calls per agent. Automated quality assurance for calls [4] lets speech analytics score every call on basic items: greeting, ID&V steps, compliance lines, hold etiquette, and call closure. I keep the rubric short (10–12 items) and add a human review for any flagged misses or high-impact errors. This widens coverage and makes coaching fair. Time saved goes straight back to supervisors.

2) Post-call summaries and disposition assist

Auto-summaries shave 30–60 seconds of after-call work (ACW) off each call. The model pulls the reason, steps taken, and next action into a short note. Agents edit and submit. Dispositions auto-fill from the same topic tags. This improves data quality and removes the slow, error-prone bits at the end of calls.

3) Churn/save detection in cancellations and complaints

I flag cancel intent, competitor mentions, and save phrase usage. Leaders see which save offers work and which do not. Agents get a checklist: verify value, offer credit where policy allows, and schedule follow-ups. Fewer repeats and fewer losses follow.

4) Root-cause and journey heatmaps

Top drivers with trend lines point to broken processes. If “wrong bill amount” rises 40% after a pricing change, I know where to look. If “password reset” spawns a spike in repeat calls within 7 days, the reset flow likely fails. Fix the process and the voice queue quiets down.
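The repeat-call check can be sketched as below. This Python example assumes each call record is a `(customer_id, topic, started_at)` tuple and counts a call as "repeated" when the same customer calls again on the same topic within the window; the function name and record shape are illustrative.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def repeat_rate_by_topic(calls, window=timedelta(days=7)):
    """Return topic -> share of calls followed by a same-topic repeat within `window`."""
    by_key = defaultdict(list)
    for customer_id, topic, started_at in calls:
        by_key[(customer_id, topic)].append(started_at)

    totals = defaultdict(int)
    repeats = defaultdict(int)
    for (_, topic), times in by_key.items():
        times.sort()
        totals[topic] += len(times)
        # Compare each call with the same customer's next call on that topic.
        for earlier, later in zip(times, times[1:]):
            if later - earlier <= window:
                repeats[topic] += 1

    return {topic: repeats[topic] / totals[topic] for topic in totals}
```

Tracked week over week, a jump in one topic's rate is the signal to go and inspect that process, such as the reset flow in the example above.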

5) Risk and compliance monitoring

Automated checks catch missing recording notices, unmasked card digits, and debt collection script errors. I route high-risk calls to a compliance review queue daily. This reduces fines and cleans up training gaps.

Expected impact snapshot

| Use case | Typical effect | Why it works |
|---|---|---|
| Automated QA | 10× coverage, faster coaching | Machines flag, humans judge |
| Auto-summary | −30–60s ACW | Less typing, better notes |
| Save detection | Higher FCR/retention | Better scripting and timing |
| Root-cause | Fewer repeats, lower AHT | Process fixes > agent heroics |
| Compliance checks | Lower risk/cost | Early detection, clear evidence |

Start with two of these. Prove lift in four weeks. Then add more.

Do I need real-time or post-call analytics?

Use real-time when words during the call change the outcome. Use post-call when trends and coaching matter more. This real-time vs post-call speech analytics [5] split keeps designs simple.

Real-time powers on-call prompts, next-best actions, and supervisor alerts. Post-call powers QA, summaries, trends, and root-cause. Many teams run both with clear scopes.

Real-time: when immediacy pays

- Agent assist: Show steps, scripts, or knowledge snippets as the customer mentions keywords (“cancel,” “refund,” “no internet”).

- Risk nudges: Prompt for the recording disclosure or identity verification if the call runs past the ideal window without it.

- Behavior cues: Gentle hints like “slow down,” “let them finish,” or “acknowledge emotion.” Keep these rare and respectful.

- Supervisor alerts: For threats, self-harm mentions, or VIP escalations.

Pros: Saves calls in the moment, standardizes rare scenarios.

Cons: Needs low latency, good ASR on noisy lines, and careful UI so agents are not overwhelmed.

Post-call: the stable backbone

- Full QA and compliance with evidence links and timecodes.

- Summaries and dispositions with human approval.

- Trend analysis: topics, repeat drivers, policy impact, and training needs.

- Model tuning: quality checks on transcripts, confusion terms, accent gaps.

Pros: Highest accuracy, lower compute cost, easier to audit.

Cons: No in-call save power.

A simple chooser

| Need | Pick | Why |
|---|---|---|
| Save risky sales/retention calls live | Real-time | Prompts change outcomes |
| Audit and coach at scale | Post-call | Accuracy and coverage |
| Lower ACW | Post-call (summaries) | No latency stress |
| Compliance guardrails | Both | Real-time nudge + post-call proof |

Hybrid plan that works

Start with post-call for coverage and ROI. Add real-time only for two scenarios: cancellations and payments/PII handling. Measure conversion, FCR, and compliance misses. If the lift is clear, expand to more intents.

How do I secure transcripts and PII data?

Transcripts hold sensitive details. I protect them like production data: least privilege, short retention, and strong redaction.

Mask PII at capture, encrypt in transit and at rest, limit who sees full text, and keep retention short. Follow PCI DSS call recording requirements [6] when handling payment data. Log every access and build a clear export/delete process.

Redaction at the edge

- Live redaction: Pause/resume or tone-mask during card numbers, CVV, SSN, bank details, and passwords.

- Post-redaction: If live masking fails, run a PII detector on transcripts and audio. Replace digits with tokens (e.g., ****-****-****-1234) and clip audio regions.
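For the transcript side of post-redaction, a minimal Python sketch might look like this. It only handles card-like digit runs (13 to 19 digits, optionally separated by spaces or dashes); the pattern and the `redact_cards` name are illustrative. A real detector would also need to cover digits transcribed as words, plus CVV, SSN, and bank details.

```python
import re

# 13-19 digits, each optionally followed by a single space or dash.
CARD_RE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")


def redact_cards(text):
    """Replace card-like digit runs with a token that keeps only the last four digits."""
    def mask(match):
        digits = re.sub(r"\D", "", match.group(0))
        return "****-****-****-" + digits[-4:]

    return CARD_RE.sub(mask, text)
```

This sketch deliberately masks every matching run without a checksum test: ASR can mishear a digit, so a failed checksum does not prove the number is harmless. The trade-off is occasional over-masking of long non-card numbers.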

For a broader view of PII redaction in call centers [7], I align internal policies with vendor capabilities.

Data minimization and retention

- Keep less: Store transcripts only as long as policy demands. Common windows: 90–180 days for coaching; longer only where law or contracts require.

- Scope by need: Do not transcribe calls that are out of scope (e.g., HR or legal privileged lines), or route them to a safe queue with recording off.
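A retention sweep can be as simple as the Python sketch below. The `RETENTION` table, the purpose labels, and the 90-day fallback for unlabelled records are assumptions for illustration; actual windows come from policy, law, and contracts.

```python
from datetime import datetime, timedelta, timezone

# Assumed policy table: purpose -> retention window (None = keep until the hold is lifted).
RETENTION = {
    "coaching": timedelta(days=180),
    "legal_hold": None,
}

# Assumed fallback: records with an unknown purpose get the shortest window.
DEFAULT_WINDOW = timedelta(days=90)


def due_for_deletion(records, now):
    """records: list of (transcript_id, purpose, created_at). Returns ids past retention."""
    due = []
    for transcript_id, purpose, created_at in records:
        window = RETENTION.get(purpose, DEFAULT_WINDOW)
        if window is not None and now - created_at > window:
            due.append(transcript_id)
    return due
```

Defaulting unknown purposes to the shortest window follows the "keep less" rule: a record has to justify a longer stay, not the other way around.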

Access control and encryption

- SSO/MFA for all analytics tools.

- Role-based access: Agents see only their calls; supervisors see team calls; auditors see evidence views with PII masked by default.

- Field-level masking: Hide card, bank, SSN, DOB, and email by default in UI and exports.

- Encryption: TLS in transit; strong encryption at rest for audio and text.

Auditability and subject rights

- Immutable logs: Who viewed what, when, and why.

- Export/delete flows: If a customer asks for deletion or export (per local law), I can find and act fast.

- Vendor due diligence: DPAs, subprocessor lists, data location, and breach SLAs.

Environment and model hygiene

- Separate environments for dev/test/prod. Use masked data in test.

- Model training: If I train custom models, I strip PII and rebalance samples to avoid bias.

- Monitoring: Alerts for large exports, unusual access, or failed redaction jobs.

A short policy I publish to the team

- Only capture what we need.

- Mask by default.

- Short retention beats long.

- Prove every access.

- Fix fast and tell the truth if anything goes wrong.

Security is not a feature; it is daily discipline tied to clear rules and simple tools.

Conclusion

Start simple: clear topics, clean labels, and strict redaction. Win early with automated QA, post-call summaries, and root-cause fixes. Add real-time where it changes outcomes, and guard transcripts like crown jewels.

Footnotes

1. High-level overview of speech analytics benefits and common call center use cases.

2. Explains how call center sentiment analysis measures emotions and improves customer experience.

3. Describes AI-powered speech analytics platforms and practical use cases for modern contact centers.

4. Shows how speech analytics powers automated QA programs and contact center quality monitoring.

5. Compares real-time and post-call speech analytics for different contact center needs.

6. Outlines PCI DSS rules for safely recording and redacting payment card data on calls.

7. Defines PII redaction and why call centers must mask sensitive data in recordings and transcripts.