Cloud VoIP can feel fast, then suddenly jittery and unpredictable. When the host CPU is busy doing “infrastructure work,” your voice packets pay the price.

A DPU is a programmable “infrastructure processor” that sits on the NIC path and offloads networking, storage, and security tasks from the host CPU. It runs its own software stack and accelerators so application cores stay focused on real workloads.

How a DPU works under the hood

A DPU is “infrastructure on a card”

A Data Processing Unit (DPU) [1] is not just a faster NIC. It is a separate computing domain that lives on the I/O edge of a server. Most DPUs combine three building blocks: a general-purpose CPU complex (often Arm cores), high-speed NIC ports, and hardware accelerators for packet processing, crypto, and storage. This mix lets the DPU run its own OS and services while it moves traffic at line rate.

The key idea is simple. A normal server burns host CPU cycles on work that is not your application. It runs vSwitch datapaths, firewall rules, encryption, overlay networks, storage protocols, and telemetry. Those tasks can become the bottleneck, especially when you have many tenants or many small packets. DPUs move those tasks closer to the wire and keep them off the host.

What “offload” really means

Offload can be fixed-function (like checksum) or programmable (like a distributed firewall). A DPU can terminate tunnels, enforce ACLs, and run virtual switching without waking host cores for every packet. It can also isolate infrastructure from tenant workloads. This matters in multi-tenant cloud VoIP, where you want the SBC and media services to be protected from noisy neighbors.

Why DPUs reduce tail latency

VoIP cares less about average latency and more about tail latency [2]. When a server is busy with interrupts, context switches, and software datapaths, a small percentage of RTP packets arrive late. That creates jitter buffer growth, audio drops, and “robot voice.” A DPU reduces that by keeping the datapath consistent. It also reduces the number of host interrupts and kernel transitions.
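To see why the average hides the problem, here is a minimal sketch that compares the mean with the median and the 99th percentile. The delay values are made up for illustration, and `percentile` is a simple nearest-rank helper, not a library call.

```python
import math

def percentile(samples, p):
    """Nearest-rank percentile: smallest sample with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]

# Hypothetical one-way delays in ms: 985 packets on time, 15 delayed by host contention.
delays_ms = [2.0] * 985 + [45.0] * 15

mean_ms = sum(delays_ms) / len(delays_ms)
p50_ms = percentile(delays_ms, 50)
p99_ms = percentile(delays_ms, 99)

print(f"mean={mean_ms:.2f} ms  p50={p50_ms} ms  p99={p99_ms} ms")
```

In this made-up sample the mean stays under 3 ms while the 99th percentile sits at 45 ms. A dashboard that only tracks the average would show nothing wrong.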

| DPU building block | What it includes | What it offloads | Why VoIP teams care |
|---|---|---|---|
| Onboard CPU | Arm cores + memory | Control plane services | Keeps infrastructure isolated |
| NIC + datapath | 25/100/200/400G ports | vSwitch, overlays, SR-IOV | Faster packet steering |
| Accelerators | Crypto, regex, storage | IPsec/TLS, firewall, NVMe-oF | Lower CPU load, lower jitter |
| Secure boot chain | Signed firmware, attestation | Root of trust | Stronger tenant isolation |

A DPU is most valuable when the “hidden infrastructure tax” is large. That happens in NFV, 5G cores, multi-tenant Kubernetes, and VoIP platforms with heavy encryption and policy enforcement.

If that baseline makes sense, the next step is clearing up the naming confusion. Many teams mix DPU, CPU, GPU, and SmartNIC in one bucket.

Transitioning from definitions to decisions is where most projects win or fail.

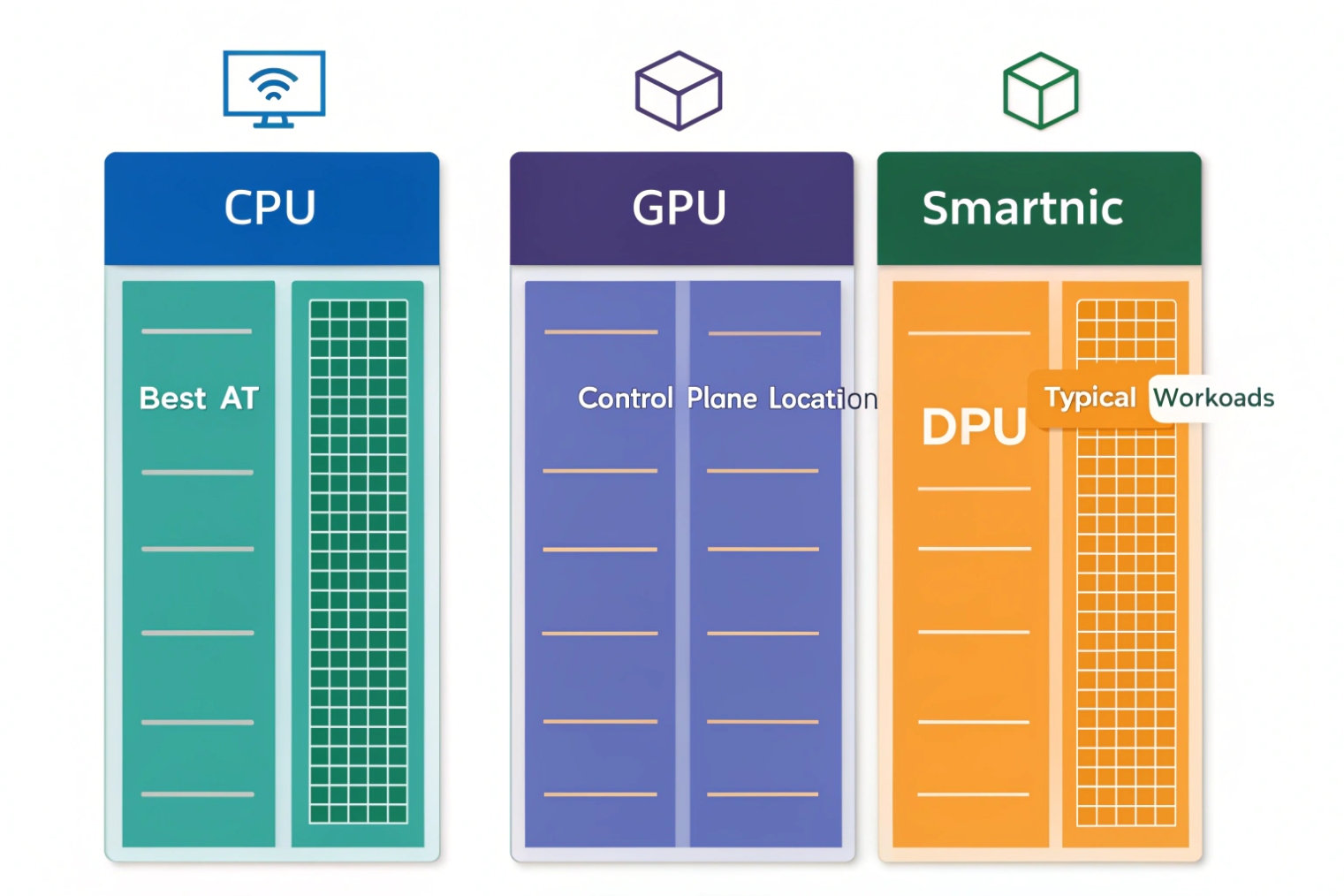

How does a DPU differ from CPU, GPU, and SmartNIC?

Teams often buy hardware for peak throughput, then discover the real bottleneck is the datapath and isolation model. Choosing the wrong class of processor leads to wasted budget.

A CPU runs general workloads, a GPU accelerates parallel compute, a SmartNIC offloads some network functions, and a DPU combines onboard CPUs plus accelerators to run full infrastructure services directly in the I/O path.

CPU: flexible, but expensive for datapaths

The host CPU is the most flexible compute resource. It is also the worst place to spend cycles on repetitive packet work when scale grows. Software vSwitch, encryption, and overlay processing can consume cores that should run your VoIP apps. CPU scheduling also introduces jitter under load.

GPU: not the right tool for packet plumbing

GPUs excel at massively parallel math. They are great for AI inference, video analytics, and media transcoding in the right pipeline. They are not a natural fit for line-rate packet steering, vSwitch enforcement, or per-flow security policies at the NIC edge.

SmartNIC: useful, but often limited control plane

SmartNIC is a broad term. Many programmable network interface cards (SmartNICs) [3] provide programmable pipelines and offloads. Still, the typical difference is that a “DPU-class” device includes enough onboard CPU and memory to host infrastructure services as a first-class control plane. It can behave like a separate host for networking and security functions, not just a helper.

DPU: the infrastructure endpoint

A DPU acts as the infrastructure endpoint for the server. That means you can run a virtual switch, distributed firewall, encryption, and telemetry on the DPU. The host sees a simplified interface. This can reduce blast radius in a compromise and can simplify multi-tenant isolation.

| Component | Primary job | Best at | Weak at |
|---|---|---|---|
| CPU | General compute | PBX logic, SIP routing, databases | Line-rate packet processing at scale |
| GPU | Parallel compute | AI, video, heavy DSP batches | Stateful network policy enforcement |
| SmartNIC | Network acceleration | Offloads, steering, some pipelines | Full control plane isolation (varies) |
| DPU | Infrastructure offload + isolation | vSwitch, security, storage services | Replacing app CPUs for business logic |

For cloud-native VoIP, the question is not “is a DPU faster.” The question is “does moving infrastructure off the host remove jitter and free cores in a measurable way.”

Once the differences are clear, it becomes easier to list which workloads DPUs actually offload in the real world.

What workloads do DPUs offload: networking, storage, and security?

Most platforms think of offload as “some NIC features.” A DPU is broader. It is a place to run the infrastructure stack.

DPUs offload networking datapaths, virtualization switching, encryption and firewalling, and storage I/O services. The goal is to free host cores, reduce interrupts, and enforce policy in the I/O path.

Networking offloads that matter at scale

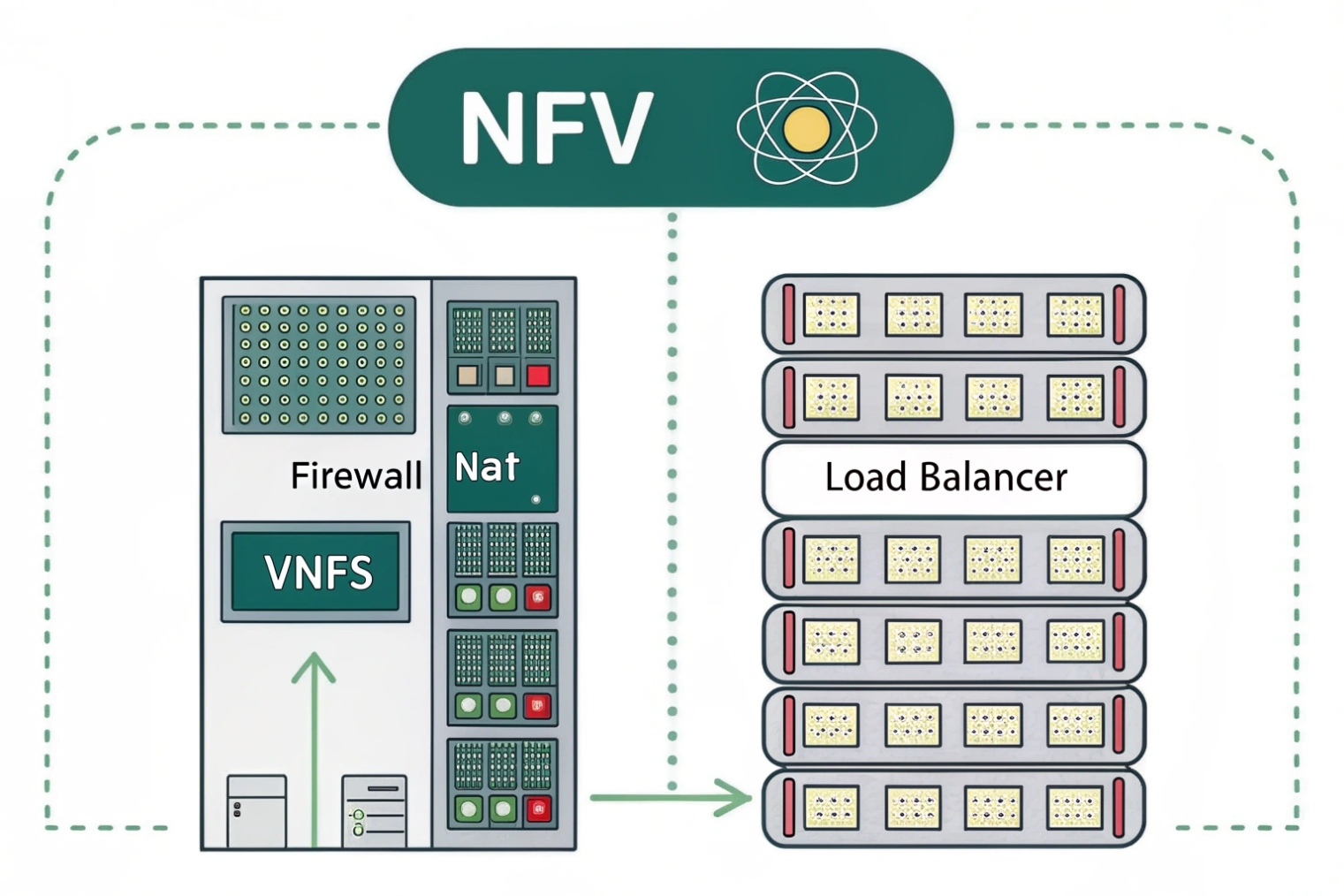

Networking is the first reason DPUs exist. Common offloads include overlay tunnels, virtual switching, SR-IOV steering, and service chaining. In Kubernetes and NFV, datapath overhead comes from encapsulation, iptables rules, and vSwitch processing. A DPU can run these closer to the wire and keep them consistent under load.

For VoIP, the networking story is mostly about consistency. RTP streams are many small packets. They are sensitive to queueing and CPU contention. When the host is doing overlay and policy processing, RTP can experience microbursts and tail latency spikes.

Storage offloads that reduce host overhead

Storage offload matters when you run stateful services at scale, like call recordings, voicemail, and analytics pipelines. DPUs can accelerate storage protocols and reduce CPU cost of I/O. This is more important in platforms that push high IOPS or use remote storage fabrics.

Security offloads that improve isolation

Security is the second big reason to deploy DPUs. Encryption and firewall enforcement at the DPU layer can reduce CPU cost and reduce risk. A DPU can enforce distributed firewalls, microsegmentation, and zero-trust policies before traffic reaches host memory.

For cloud VoIP, this is useful when you run multi-tenant SBCs, media relays, and signaling gateways. It lets the infrastructure layer enforce policy even if a tenant workload is compromised.

| Offload category | Examples | Benefit | VoIP tie-in |
|---|---|---|---|
| Networking | vSwitch, overlay, SR-IOV, QoS | Lower CPU, lower jitter | More stable RTP delivery |
| Security | IPsec/TLS, firewall, IDS hooks | Stronger isolation, faster crypto | SRTP/TLS at scale |
| Storage | NVMe-oF, IO virtualization | Higher IOPS, fewer host cycles | Recording and analytics pipelines |
| Telemetry | Flow logs, counters | Better visibility | Faster root-cause on jitter |

A DPU is not mandatory for every VoIP deployment. It shines when infrastructure tasks are the dominant cost and when jitter is caused by host contention.

That leads to the buying question. DPUs cost money, so the decision must tie to measurable outcomes.

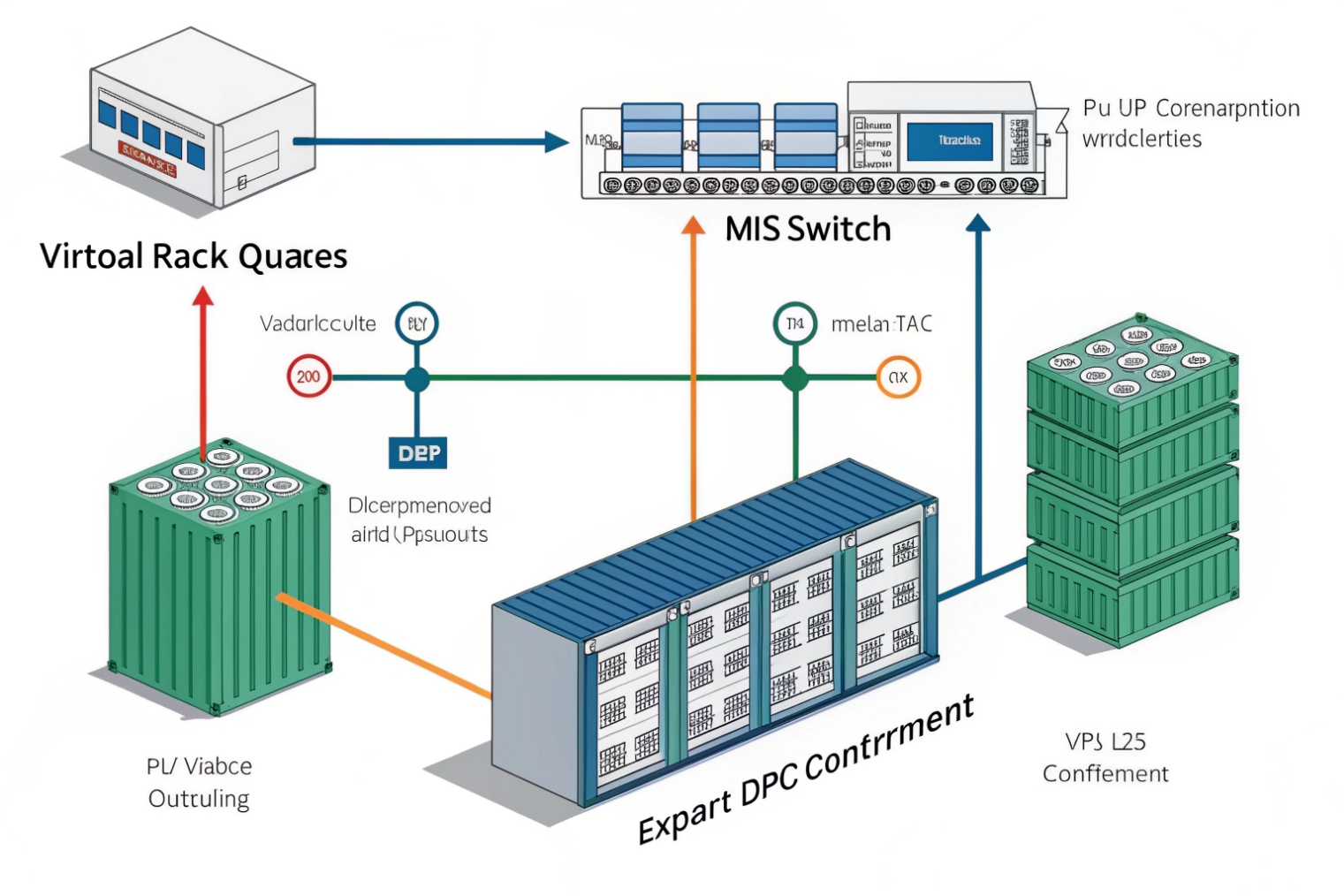

Should I deploy DPUs for NFV, 5G core, or cloud-native VoIP?

A DPU can look like a silver bullet. It is not. It is a tool that fits some architectures perfectly and others poorly.

DPUs make sense when packet processing, isolation, and encryption are the limiting factors, like NFV datapaths and 5G user-plane workloads. For cloud-native VoIP, they pay off mainly in multi-tenant SBC/media platforms or heavy security and observability environments.

NFV: often a strong match

Network Functions Virtualization (NFV) [4] stacks run vSwitches, overlays, and virtual appliances. They also push high packet rates and many flows. In these environments, the host CPU can spend a large slice of time just moving packets. A DPU can offload vSwitch and security services and return cores to VNFs or CNFs.

5G core: strongest in the user plane

In 5G, the user plane can be extremely packet heavy. It also demands low latency and predictable performance. DPUs can help by accelerating datapaths, offloading encryption, and improving tail latency. In many designs, the DPU becomes part of the secure edge for traffic entering the server.

Cloud-native VoIP: depends on what hurts today

VoIP workloads split into signaling and media:

- SIP signaling is not always heavy, but it is sensitive to latency spikes.

- Media relays and RTP handling can become packet-rate heavy, especially with SRTP and many calls.

DPUs can help when the platform is:

- Multi-tenant and needs strong isolation

- Running large east-west traffic across overlays

- Encrypting everything (TLS + SRTP) at high scale

- Struggling with jitter due to host CPU contention

DPUs will not help much if your real bottleneck is:

- Bad WAN links and packet loss

- Poor Wi-Fi

- Wrong QoS policy upstream

- Underpowered SBC application logic

A short story from a past lab build can help. In one test setup, a cloud VoIP stack looked fine until load tests hit peak. Jitter spikes appeared even though average CPU was “not that high.” The real issue was vSwitch and overlay overhead creating tail latency. Moving part of the datapath off-host reduced the spikes. The lesson: measure tail latency, not only average CPU.

| Scenario | DPU ROI likelihood | Why |
|---|---|---|
| NFV vSwitch-heavy nodes | High | Datapath offload frees many cores |
| 5G UPF and edge packet cores | High | Packet-rate and tail latency dominate |
| Multi-tenant VoIP platform | Medium to high | Isolation + crypto + datapath stability |
| Single PBX in one office | Low | Network edges, not host datapath, usually dominate |
| Small intercom controller node | Low | Simpler traffic patterns |

If the decision is “yes,” the next problem becomes operational. DPUs must be sized, programmed, and monitored like a separate infrastructure layer.

That is where many Kubernetes teams struggle, because they treat DPUs like regular NICs.

How do I size, program, and monitor DPUs in Kubernetes?

A DPU deployment is a platform decision. It changes networking, security, and observability paths. If it is done casually, it becomes hard to debug.

In Kubernetes, DPU sizing starts with packet rate, flow count, and crypto needs. Programming typically uses vendor SDKs, SR-IOV, or offload-aware CNIs. Monitoring must include both host and DPU counters so you can prove where jitter and drops occur.

Sizing: think in packets, not only in gigabits

VoIP and NFV are often packet-rate limited. Small RTP packets can overwhelm a datapath before bandwidth is full. Sizing should include:

- Expected peak packets per second (pps)

- Number of flows and policy rules (ACLs, microsegmentation)

- Encryption load (TLS handshakes, SRTP sessions)

- Overlay and service mesh overhead

- Required telemetry depth (flow logs can be expensive)

For VoIP nodes, also include:

- Peak concurrent calls per node

- SRTP on/off mix

- Media relay features (DTMF relay, transcoding, recording taps)
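The “packets, not gigabits” point is easy to check with arithmetic. The sketch below estimates the inbound packet rate and bandwidth at a media relay. The function name and all defaults are assumptions for illustration: G.711 with 20 ms packetization, and plain RTP/UDP/IPv4/Ethernet headers with no SRTP tag, VLAN tag, or overlay encapsulation.

```python
def rtp_relay_load(concurrent_calls, ptime_ms=20, payload_bytes=160, header_bytes=54):
    """Estimate receive-side packet rate and bandwidth at a media relay.

    Assumed defaults (illustrative, not from a vendor datasheet):
    - 20 ms packetization, so 50 packets per second per stream
    - 160-byte G.711 payload
    - 54 header bytes: RTP 12 + UDP 8 + IPv4 20 + Ethernet 14
    SRTP auth tags, VLAN tags, and overlay headers would add more bytes.
    """
    packets_per_stream = 1000 / ptime_ms
    streams_in = concurrent_calls * 2  # one inbound stream from each party
    pps = streams_in * packets_per_stream
    gbps = pps * (payload_bytes + header_bytes) * 8 / 1e9
    return pps, gbps

pps, gbps = rtp_relay_load(10_000)
print(f"{pps:,.0f} pps inbound, {gbps:.2f} Gbit/s")
```

Under these assumptions, 10,000 concurrent calls produce about one million inbound packets per second at under 2 Gbit/s, and the relay transmits roughly the same amount again. The link is far from full, but the datapath is already handling a serious packet rate.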

A practical sizing approach is to run a baseline load test, then re-run with infrastructure features enabled (policy, logging, encryption). The gap shows your “infrastructure tax.” That tax is what a DPU can remove.
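The gap between the two runs can be expressed as a simple number. This is a minimal sketch with hypothetical core counts. The inputs are whatever “busy cores at peak” figure your load test reports.

```python
def infrastructure_tax(baseline_cores, loaded_cores):
    """Return (extra busy cores, share of the loaded total) caused by infrastructure features.

    baseline_cores: busy cores at peak load with policy, logging, and encryption off
    loaded_cores:   busy cores at the same load with those features on
    """
    if baseline_cores < 0 or loaded_cores <= 0:
        raise ValueError("core counts must be positive")
    extra = loaded_cores - baseline_cores
    return extra, extra / loaded_cores

# Hypothetical results from two runs at the same call load.
extra, share = infrastructure_tax(baseline_cores=6.0, loaded_cores=10.0)
print(f"infrastructure tax: {extra:.1f} cores ({share:.0%} of busy cores)")
```

Treat the result as an upper bound on what offload can return. Some of that work may still stay on the host, depending on what your DPU and CNI actually offload.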

Programming: treat the DPU as a separate platform plane

Different vendors expose different tooling, but the operational pattern is similar:

- Use Single Root I/O Virtualization (SR-IOV) [5] or passthrough for deterministic datapaths.

- Use an offload-aware Container Network Interface (CNI) [6] if you want policy enforcement on the DPU path.

- Keep version control for DPU firmware and DPU OS images.

- Automate provisioning like any other node pool.
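Version control only helps if something checks it. Here is a minimal sketch of a drift check that compares a pinned baseline against what each node reports. The node names, component names, and version strings are all hypothetical. How you collect the observed versions depends on your vendor tooling.

```python
def find_drift(desired, observed):
    """Return {node: {component: (desired, observed)}} for every version mismatch."""
    drift = {}
    for node, versions in observed.items():
        mismatches = {
            component: (want, versions.get(component))
            for component, want in desired.items()
            if versions.get(component) != want
        }
        if mismatches:
            drift[node] = mismatches
    return drift

# Hypothetical pinned baseline, kept in version control.
desired = {"dpu_firmware": "1.4.2", "dpu_os_image": "2024.10"}

# Hypothetical inventory gathered from the node pool.
observed = {
    "media-node-a": {"dpu_firmware": "1.4.2", "dpu_os_image": "2024.10"},
    "media-node-b": {"dpu_firmware": "1.3.9", "dpu_os_image": "2024.10"},
}

for node, mismatches in find_drift(desired, observed).items():
    print(node, mismatches)
```

A missing component shows up as `None` on the observed side, so a node that failed to report is flagged as well.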

I prefer a simple rule: keep application pods unaware of the DPU at first. Offload the infrastructure layer without changing the app. Then move to deeper integrations only after you prove the baseline benefit.

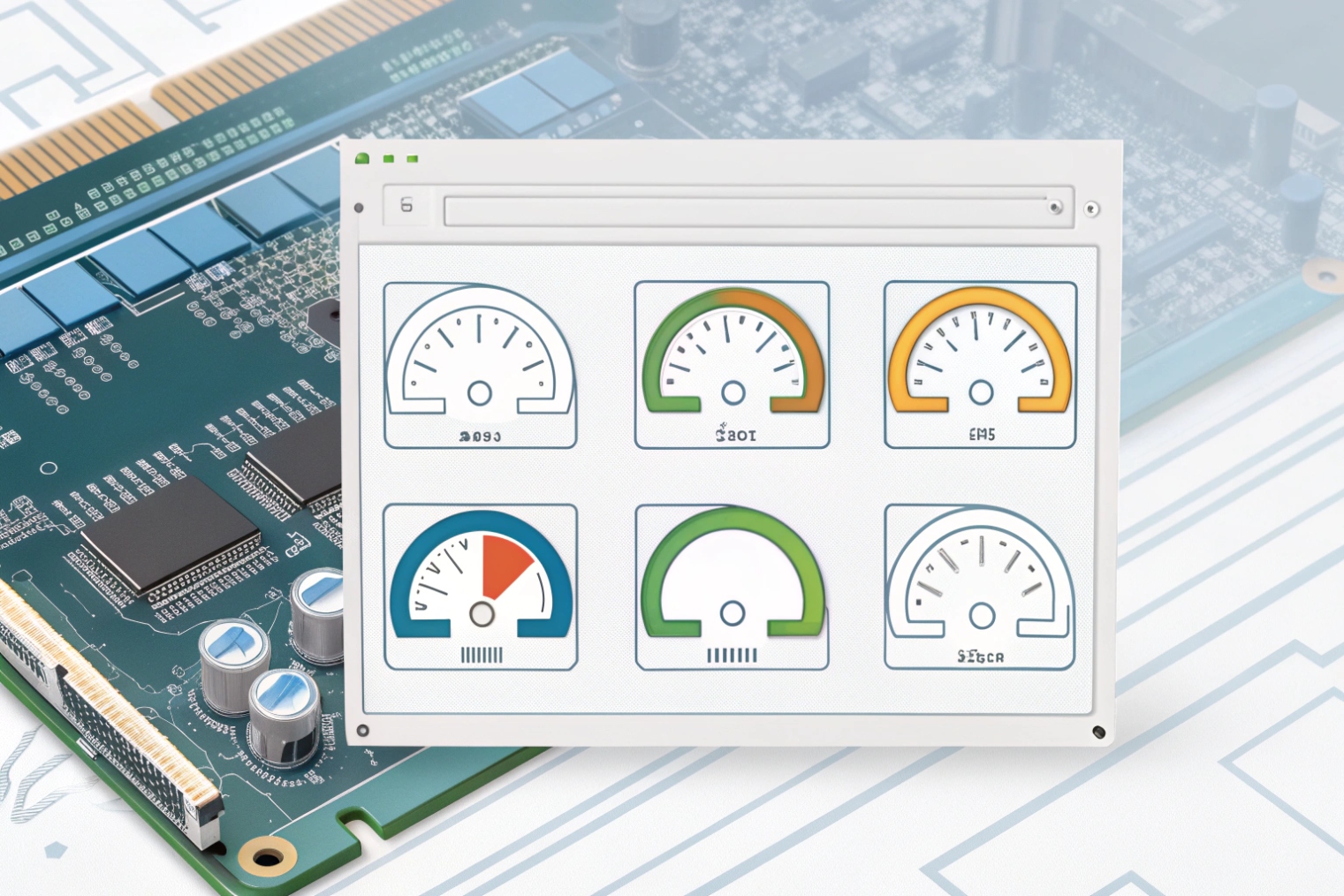

Monitoring: you need visibility on both sides of the PCIe boundary

If RTP jitter increases, the cause might be:

- Host CPU scheduling

- Host vSwitch queues

- DPU queues

- Physical NIC congestion

- Upstream fabric microbursts

So the monitoring plan should include:

- Host: CPU steal, softirq, network stack drops, queue lengths

- DPU: port counters, drops per queue, tunnel/ACL stats, crypto utilization

- App: RTP jitter, packet loss, RTCP reports, MOS estimates
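For the app-side jitter number, it helps to know how it is computed. The sketch below follows the interarrival jitter estimator from RFC 3550: take the change in transit time between consecutive packets and smooth it with a gain of 1/16. It is simplified. It assumes RTP timestamps and arrival times are already in the same clock units and it ignores timestamp wraparound.

```python
def interarrival_jitter(packets):
    """RFC 3550 style jitter estimate.

    packets: iterable of (rtp_timestamp, arrival_time) pairs in arrival order,
    both expressed in the same units (for example 8 kHz clock ticks).
    """
    jitter = 0.0
    prev_transit = None
    for rtp_ts, arrival in packets:
        transit = arrival - rtp_ts
        if prev_transit is not None:
            d = abs(transit - prev_transit)
            jitter += (d - jitter) / 16  # exponential smoothing, gain 1/16
        prev_transit = transit
    return jitter

# 20 ms packets on an 8 kHz clock (160 ticks apart). The third packet arrives 80 ticks late.
steady = [(0, 1000), (160, 1160), (320, 1320), (480, 1480)]
one_late = [(0, 1000), (160, 1160), (320, 1400), (480, 1480)]

print(interarrival_jitter(steady))    # 0.0
print(interarrival_jitter(one_late))  # 9.6875
```

One late packet moves the estimate twice: once when it arrives late, and once when the next packet arrives on time again. Because the estimator is smoothed, short spikes are damped, so it is worth tracking per-packet delay percentiles next to it.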

A clean operational setup exports DPU metrics into the same Prometheus metrics pipeline [7] used for the cluster. Then you can correlate “RTP jitter spikes” with “queue drops on DPU egress” or “host softirq saturation.”
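Once the counters sit side by side, the correlation can start very simply. This is a minimal sketch, assuming you have already pulled per-interval samples out of your metrics store. The field names and thresholds are hypothetical and are not real Prometheus or vendor metric names.

```python
def attribute_spikes(samples, jitter_ms_limit=30.0, drop_limit=0, softirq_limit=0.8):
    """For each interval with high RTP jitter, list which infrastructure counters were also high."""
    findings = []
    for s in samples:
        if s["rtp_jitter_ms"] <= jitter_ms_limit:
            continue  # no spike in this interval
        suspects = []
        if s["dpu_egress_drops"] > drop_limit:
            suspects.append("dpu_egress_queue")
        if s["host_softirq_share"] > softirq_limit:
            suspects.append("host_softirq")
        findings.append((s["ts"], suspects or ["unexplained"]))
    return findings

# Hypothetical one-minute samples aligned on the same timestamps.
samples = [
    {"ts": "10:00", "rtp_jitter_ms": 8.0, "dpu_egress_drops": 0, "host_softirq_share": 0.35},
    {"ts": "10:01", "rtp_jitter_ms": 42.0, "dpu_egress_drops": 1200, "host_softirq_share": 0.40},
    {"ts": "10:02", "rtp_jitter_ms": 37.0, "dpu_egress_drops": 0, "host_softirq_share": 0.93},
    {"ts": "10:03", "rtp_jitter_ms": 33.0, "dpu_egress_drops": 0, "host_softirq_share": 0.30},
]

for ts, suspects in attribute_spikes(samples):
    print(ts, suspects)
```

The “unexplained” bucket matters. If spikes land there often, the cause is probably outside the host and the DPU, for example upstream fabric microbursts.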

| Kubernetes area | What to implement | What to watch |
|---|---|---|
| Networking | SR-IOV, offload-capable CNI | pps, queue drops, latency |
| Security | DPU-side firewall/microseg | rule scale, hit counts, CPU on DPU |
| Storage | DPU IO virtualization (if used) | IOPS, latency, timeouts |
| Observability | Export counters, flow logs | overhead vs visibility balance |
| Lifecycle | Firmware + OS management | drift, rollback safety |

When this is done well, the DPU becomes a stable infrastructure layer, and your SIP and RTP workloads see a calmer host CPU and fewer tail-latency spikes.

Conclusion

A DPU is an infrastructure processor that offloads networking, storage, and security from host CPUs. It helps most in NFV, 5G, and large VoIP platforms where tail latency and isolation matter.

Footnotes

1. Practical overview of DPU architecture, offloads, and where DPUs sit in the server I/O path.

2. Explains tail latency and why worst-case delays matter more than averages for real-time traffic.

3. Definitions and examples of SmartNIC capabilities, programmability, and common offload patterns.

4. ETSI’s NFV materials help map NFV terms (VNFs/CNFs, vSwitch, service chains) to real deployments.

5. Background on SR-IOV and how it provides low-overhead, direct device access for virtualized workloads.

6. CNI spec explains how Kubernetes networking plugins integrate, which matters for offload-aware datapaths.

7. Prometheus overview shows how to export, scrape, and query metrics for correlating jitter with infrastructure counters.